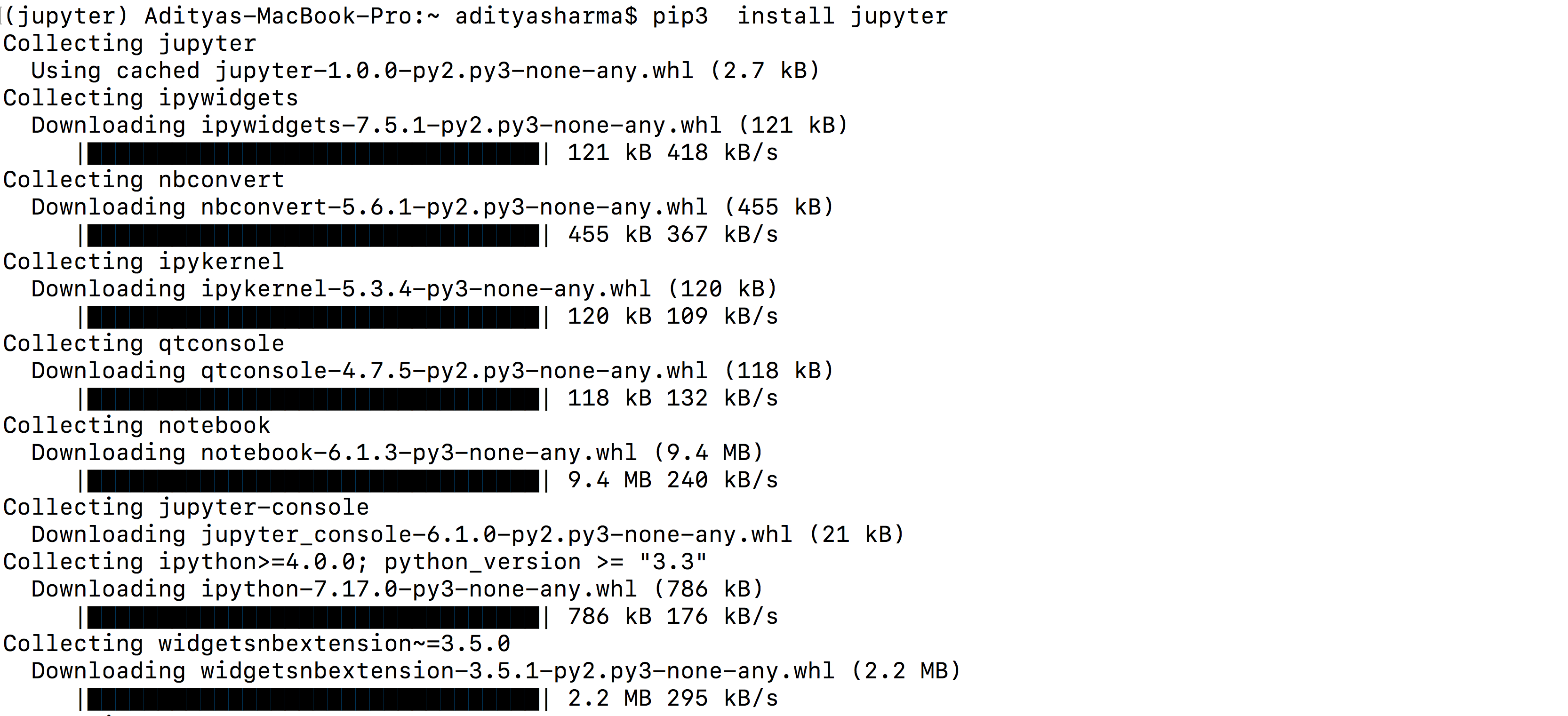

Packages that install without complaint but then can't be imported in the notebook are a perennial source of StackOverflow questions (e.g. this, that, here, there, another, this one, that one, and this, etc.). Fundamentally, the problem is usually rooted in the fact that the Jupyter kernels are disconnected from Jupyter's shell; in other words, the installer points to a different Python version than the one being used in the notebook.
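A quick way to check for this mismatch from the Terminal is to ask each tool which interpreter it belongs to. The sketch below assumes `python3` is the interpreter you expect the notebook to use; the function names `python_path` and `install_into` are made up for illustration. Installing with `python3 -m pip` ties the install to that exact interpreter instead of whichever `pip` happens to be first on your `PATH`.

```shell
#!/usr/bin/env bash
# Sketch: compare the interpreter that `pip` installs into with the one you expect.
# Assumption: python3 is the interpreter your notebook kernel runs.

# Print the full path of the interpreter a python command resolves to.
python_path() {
  "$1" -c 'import sys; print(sys.executable)'
}

# Install a package into exactly the interpreter given as $1
# (hypothetical helper; it only wraps `<python> -m pip install`).
install_into() {
  local py="$1" pkg="$2"
  "$py" -m pip install "$pkg"
}

echo "python3 resolves to:    $(python_path python3)"
# `pip --version` reports the site-packages directory and Python version it belongs to.
echo "pip on PATH belongs to: $(pip --version 2>/dev/null || echo 'no pip found on PATH')"
```

If the two disagree, `install_into python3 X` (or plain `python3 -m pip install X`) installs into the interpreter you checked. Inside a running notebook, `import sys; print(sys.executable)` shows which interpreter the kernel itself uses; if that path differs again, install with that path instead.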

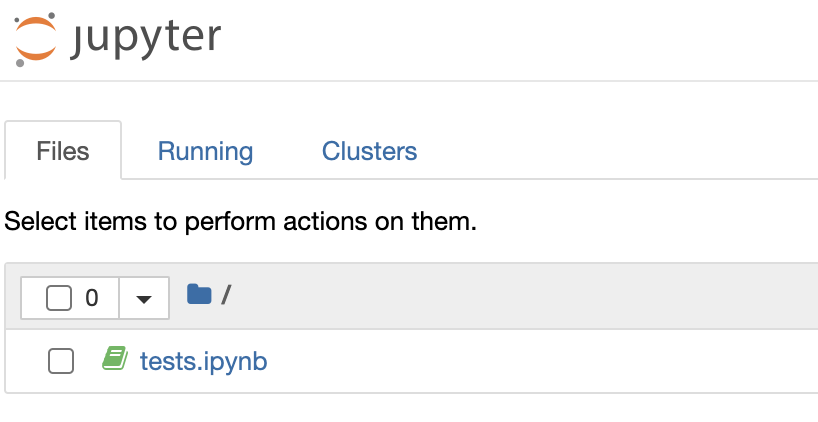

In software, it's said that all abstractions are leaky, and this is as true for the Jupyter notebook as it is for any other software. I most often see this manifest itself with the following issue: "I installed package X and now I can't import it in the notebook."

Now tell Pyspark to use Jupyter: in your `~/.bashrc` or `~/.zshrc` file, add `export PYSPARK_DRIVER_PYTHON=jupyter` and `export PYSPARK_DRIVER_PYTHON_OPTS='notebook'`. If you want to use Python 3 with Pyspark (see step 3 above), you also need to add `export PYSPARK_PYTHON=python3`. Your `~/.bashrc` or `~/.zshrc` should now have a section that looks kinda like this (excerpt):

    # Spark
    # ...
    export PYSPARK_DRIVER_PYTHON_OPTS='notebook'
    export PYSPARK_PYTHON=python3 # only if you're using Python 3

Now save the file and source it in your Terminal (`source ~/.bashrc` or `source ~/.zshrc`). To start Pyspark and open up Jupyter, you can then simply run `$ pyspark`. You only need to make sure you're inside your pipenv environment.
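If you'd rather not edit the rc file by hand, the export lines above can be appended by a small function. This is a sketch under a few assumptions: the function name `add_pyspark_jupyter_vars` is hypothetical, it picks `~/.zshrc` when your login shell is zsh and `~/.bashrc` otherwise, and it checks for an existing `PYSPARK_DRIVER_PYTHON=jupyter` line so running it twice doesn't duplicate the block.

```shell
#!/usr/bin/env bash
# Sketch: append the PySpark + Jupyter exports to an rc file exactly once.
# Pass the rc file explicitly, or let the function guess it from $SHELL (assumption).

add_pyspark_jupyter_vars() {
  local rc="${1:-}"
  if [ -z "$rc" ]; then
    case "${SHELL:-}" in
      */zsh) rc="$HOME/.zshrc" ;;
      *)     rc="$HOME/.bashrc" ;;
    esac
  fi
  # Skip if an earlier run (or a manual edit) already added the settings.
  if [ -f "$rc" ] && grep -q 'PYSPARK_DRIVER_PYTHON=jupyter' "$rc"; then
    echo "already configured: $rc"
    return 0
  fi
  # Quoted heredoc delimiter: the lines are written literally, no expansion.
  cat >> "$rc" <<'EOF'

# PySpark + Jupyter
export PYSPARK_DRIVER_PYTHON=jupyter
export PYSPARK_DRIVER_PYTHON_OPTS='notebook'
export PYSPARK_PYTHON=python3  # only if you're using Python 3
EOF
  echo "updated: $rc (now run: source $rc)"
}
```

Call it as `add_pyspark_jupyter_vars` (guessing the file) or `add_pyspark_jupyter_vars ~/.zshrc`. On macOS, Terminal starts bash as a login shell, which reads `~/.bash_profile`; if the variables don't show up under bash, check that `~/.bash_profile` sources `~/.bashrc`.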

So, we'll stick to Pyspark in this guide. While dipping my toes into the water, I noticed that the guides I could find online weren't entirely transparent, so I've tried to compile here the steps I actually took to get this up and running. The original guides I'm working from are here, here and here.

With the prerequisites in place, you can now install Apache Spark on your Mac. Download the newest version (a file ending in …), unpack it, and move the resulting folder to `/opt`:

`$ sudo mv spark-2.4.3-bin-hadoop2.7 /opt/spark-2.4.3`

Next, create a symbolic link (symlink) to your Spark version. What's happening here? By creating a symbolic link to our specific version (2.4.3), we can have multiple versions installed in parallel and only need to adjust the symlink to switch between them.

Up to macOS 10.14 the default shell in the Terminal app was bash, but from 10.15 on it is the Z shell (zsh). So, depending on your version of macOS, set the Spark variables (under a `# Spark` comment) either in `~/.bashrc` (bash) or in `~/.zshrc` (zsh).
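The symlink step can be sketched as follows. The `use_spark_version` function is a hypothetical helper, the link location `/opt/spark` is an assumption (the text above doesn't name it), and the commented commands assume the Spark 2.4.3 / Hadoop 2.7 archive used above. Pointing `SPARK_HOME` at the link rather than at a versioned folder is what lets you switch versions without touching your rc file.

```shell
#!/usr/bin/env bash
# Sketch: keep one stable path (e.g. /opt/spark) pointing at the Spark version in use.
#
# Typical one-off setup (archive name assumed from the version used above):
#   tar -xzf spark-2.4.3-bin-hadoop2.7.tgz
#   sudo mv spark-2.4.3-bin-hadoop2.7 /opt/spark-2.4.3
#   sudo ln -sfn /opt/spark-2.4.3 /opt/spark
#
# Assumed rc-file lines that use the link instead of a versioned path:
#   export SPARK_HOME=/opt/spark
#   export PATH="$SPARK_HOME/bin:$PATH"

# Repoint <link-path> at <version-dir>; hypothetical helper, no sudo inside.
use_spark_version() {
  local target="$1" link="$2"
  if [ ! -d "$target" ]; then
    echo "no such Spark directory: $target" >&2
    return 1
  fi
  # -s: symbolic link, -f: replace an existing link,
  # -n: don't descend into the directory an existing link points to.
  ln -sfn "$target" "$link"
  echo "$link -> $(readlink "$link")"
}
```

Because the helper contains no `sudo`, use it where the link location is writable; for `/opt`, the plain `sudo ln -sfn …` line in the comments does the same job. Without `-n`, re-running `ln` against an existing link to a directory would create a new link inside the old version's folder instead of replacing the link.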

How to install Jupyter Notebook on a MacBook

Whether it's for social science, marketing, business intelligence or something else, the number of times data analysis benefits from heavy-duty parallelization is growing all the time. Apache Spark is an awesome platform for big data analysis, so getting to know how it works and how to use it is probably a good idea. Setting up your own cluster, administering it etc. is a bit of a hassle just to learn the basics, though (although Amazon EMR or Databricks make that quite easy, and you can even build your own Raspberry Pi cluster if you want…), so getting Spark and Pyspark running on your local machine seems like a better idea. You can also use Spark with R and Scala, among others, but I have no experience with how to set that up.